Today’s organizations face major challenges when it comes to securing servers, networks, and devices. Especially as remote working continues to pick up steam, cybersecurity solutions are more vital than ever. Having a proper Endpoint Detection and Response (EDR) solution in place will ensure the protection of both organizations and remote workers alike, and it’s all possible through […]

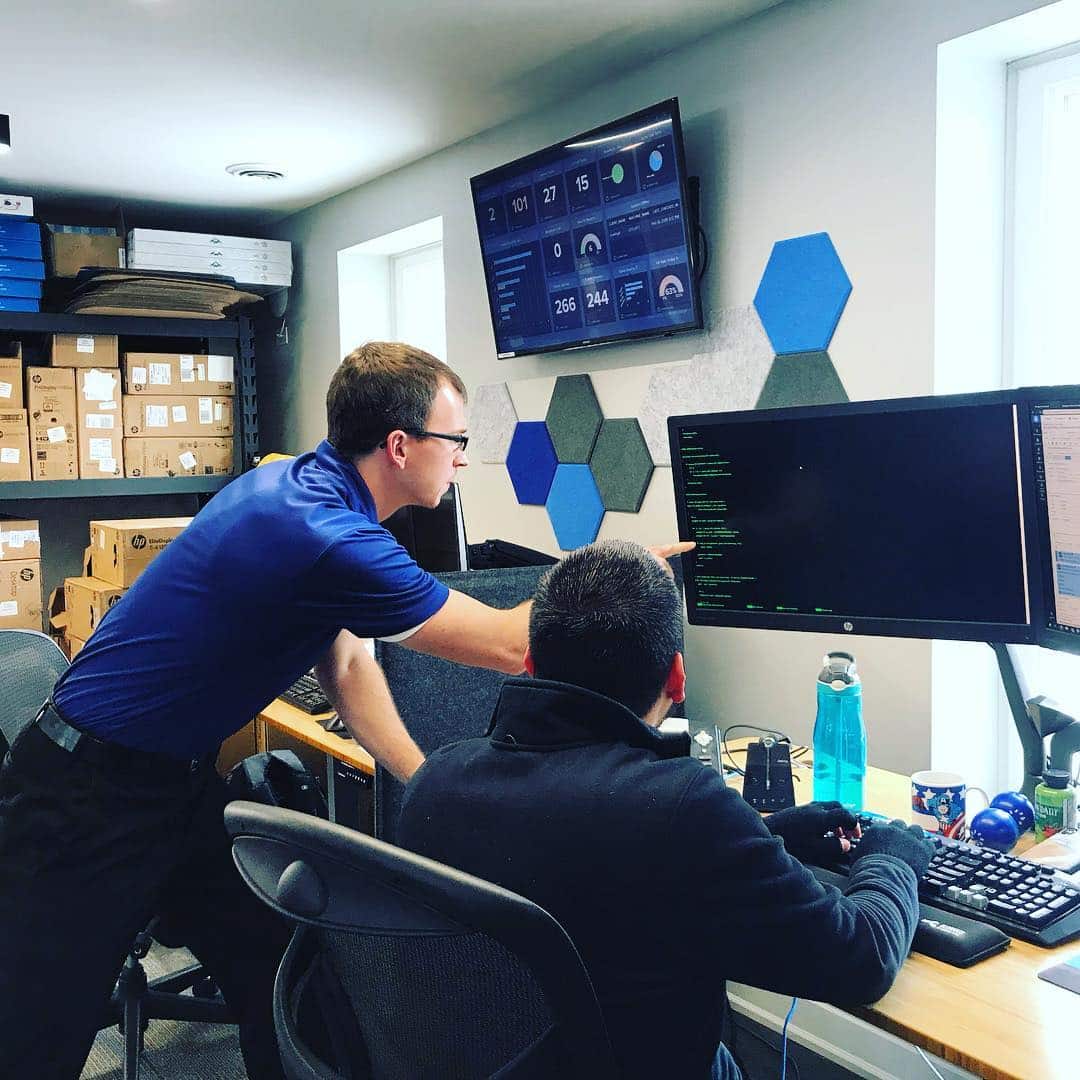

Welcome to Dura-Tech

Protecting Chicagoland Organizations From Cybercrime and Ransomware Together

As previously announced, Dura-Tech has joined with LeadingIT! Starting in 2023, the Dura-Tech branding will be phased out as we unite under the LeadingIT name, including forwarding this website to GoLeadingit.com. Everything you have come to know and love as a client and partner of Dura-Tech remains intact, with more resources than ever to provide you with the Best Cybersecurity and Fastest Response Times you will find in all of Chicagoland (and soon beyond)!

Existing Clients

Support

Use the same phone number and email address you're used to! Any updates to this process will be directly communicated to you.

Insights from Todd Creek

4 Reasons Endpoint Detection & Response Is Critical

December 15, 2022

4 Ways To Protect Your Email

December 8, 2022

These days, having an email is like having a car. You need one to get yourself from point A to point B. The difference is that a car gets you to physical places while an email helps you accomplish things digitally. Anytime you need to set up an account online, make a purchase, or send […]

The Importance of Cybersecurity Compliance

December 1, 2022

Since the emergence of the COVID-19 pandemic, cybersecurity compliance is more important than ever thanks to new industry standards and regulatory requirements. Cyber risk has increased as more people transition to remote work environments, and it’s becoming more apparent that organizations are not well-prepared for remote cyber threats. Now, government organizations aim to mitigate industry […]

Stay Safe During the Holidays by Avoiding these Common Scams

November 16, 2022

According to the FBI’s 2021 crime report, Americans lost over $6.9 billion to scams last year, which included $337 million in online shopping and non-delivery scams. During the holiday season, these scams increase tenfold as bad actors aim to capitalize on things like increased online gift shopping, charity donations, and people searching for seasonal jobs. Many […]

Benefits of Security Scans

November 10, 2022

Did you know that 76% of all software has at least one vulnerability? Over the last decade, we’ve seen an increased need for security due to the mass transition to a digital world. With everything kept online, it has become vital to ensure your data is safe and protected. One of the best ways to test […]

3 Bad Habits In Security To Avoid

October 27, 2022

In today’s world, cyber hackers are lurking around every corner, and businesses need to be as secure as possible. These hackers will stop at nothing to break through cyber defenses. That is why protecting your business from potential threats is so important. There’s just one problem: many companies have outdated cybersecurity practices and certain bad habits that […]

Cybersecurity Best Practices

October 20, 2022

Cybersecurity is an essential component of IT infrastructure. Companies must implement strong cybersecurity measures due to rising cyber attacks and data breaches. Fortunately, numerous best practices can help organizations prevent cyber threats and reduce the risk of an attack. But what exactly does “best practices in cyber security” mean? As cyber risks have increased and cyber […]

Maintain Optimal Password Security With These 5 Best Practices

October 13, 2022

Password security is one of the most important things to be aware of these days. Creating a unique password for each of your online accounts is easier said than done, but it’s essential if you want to protect yourself from cyber threats. Despite growing awareness around online security risks, most people still opt for creating […]

Cybersecurity Is More Than Technology, It’s People Too

October 6, 2022

We hear about cyberattacks and data breaches constantly. If you have a pulse, you’re probably aware of the heavy emphasis on cybersecurity’s technical side. However, it is not enough to place sole emphasis on technical solutions to these problems. According to IBM, human error is the root cause of 95% of data breaches. Cybersecurity also requires […]

The Benefits Of Cybersecurity

September 29, 2022

Today’s digital world is filled with risks. As businesses become more reliant on cloud services and Internet of Things (IoT) devices, potential internal and external cyber threats, such as ransomware, misused credentials, and data breaches, also increase. However, you can reduce this risk by implementing cybersecurity solutions. An article published by Forbes in 2021 states that Silicon […]


Customer Support | Website by Webfoot | © 2022 Dura-Tech Enterprises, Inc